HiDream-O1-Image: Generate, Edit & Personalize Images at 2K Resolution

The only open-source AI image model that encodes raw pixels, text, and task conditions in one unified transformer — no external VAE, no disjoint encoders. Just describe it. Generate it. Right here.

Model Overview

What Is HiDream-O1-Image and Why It Outperforms Bigger Models

Most image models stack a VAE on top of a text encoder on top of a diffusion model. HiDream-O1-Image collapses all three into one.

HiDream-O1-Image is an 8-billion-parameter, pixel-native unified image generative model developed by HiDream-ai, open-sourced under the MIT License on May 8, 2026. Built on a Pixel-level Unified Transformer (UiT), it encodes raw pixels, text prompts, and task-specific conditions in a single shared token space — eliminating the external VAEs and disjoint text encoders found in traditional latent diffusion models.

The result is one model that natively handles text-to-image generation, instruction-based image editing, and subject-driven personalization at up to 2,048 × 2,048 resolution.

Pixel-Native Architecture — No VAE, No Compromise

Traditional latent diffusion pipelines compress pixels into a bottlenecked latent space before generation, causing fine-detail loss at high resolutions. HiDream-O1-Image operates directly on raw RGB pixel patches, meaning every detail in your 2K output was generated, not upscaled. You get sharper text rendering, tighter edge definition, and more faithful color reproduction — especially for commercial graphics and poster work.
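To make the pixel-patch idea concrete, here is a rough sketch of how an RGB image could be cut into patch tokens without any VAE step. The patch size of 16 and both function names are illustrative assumptions; the model's actual patch size and tokenizer are not stated here.

```python
def count_pixel_patches(width: int, height: int, patch: int = 16) -> int:
    """Number of non-overlapping patch tokens for an image.

    patch=16 is an assumed value for illustration only.
    """
    if width % patch or height % patch:
        raise ValueError("image sides must be multiples of the patch size")
    return (width // patch) * (height // patch)


def patchify(pixels, patch):
    """Split a row-major grid of (R, G, B) tuples into flattened patches.

    Each returned patch is a flat list of patch * patch * 3 channel values,
    i.e. the raw numbers a pixel-space model would embed directly.
    """
    height, width = len(pixels), len(pixels[0])
    patches = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            flat = []
            for y in range(top, top + patch):
                for x in range(left, left + patch):
                    flat.extend(pixels[y][x])
            patches.append(flat)
    return patches


# A tiny 4 x 4 image split into 2 x 2 patches gives 4 tokens of 12 values each.
tiny = [[(x, y, 0) for x in range(4)] for y in range(4)]
tokens = patchify(tiny, patch=2)
print(len(tokens), len(tokens[0]))      # 4 12
print(count_pixel_patches(2048, 2048))  # 16384 under the assumed patch size
```

Under that assumed patch size, a full 2,048 × 2,048 image becomes 16,384 pixel tokens, which gives a feel for the sequence lengths a pixel-native model has to handle.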

Benchmark-Leading Performance at 7× Smaller Footprint

HiDream-O1-Image scores 0.90 on GenEval (compositional generation), 89.83 on DPG-Bench (dense prompt alignment), and 10.37 on HPSv3 (human preference). That puts it ahead of GPT Image 2 and DALL-E 3 on the benchmarks where those models have reported scores, and ahead of FLUX.2 Dev, which at 56B parameters is 7× larger. For teams that care about quality-per-dollar, this efficiency gap is significant.

Built-In Reasoning Agent for Complex Prompt Understanding

Standard text-to-image models break down when faced with implicit spatial logic, multi-object scenes, or layered narrative prompts. HiDream-O1-Image ships with an integrated Reasoning-Driven Prompt Agent (powered by Gemma-4-31B or any OpenAI-compatible API) that rewrites and logically enriches your raw instructions before generation — so your prompt goes in as you wrote it, and the model receives a precision-engineered directive.

Core Features

HiDream-O1-Image Features That Replace an Entire Image Production Pipeline

Six capabilities. One model. HiDream-O1-Image handles everything from text-to-image generation to multilingual text rendering — natively, without stitching tools together.

Text-to-Image Generation at Native 2048 × 2048

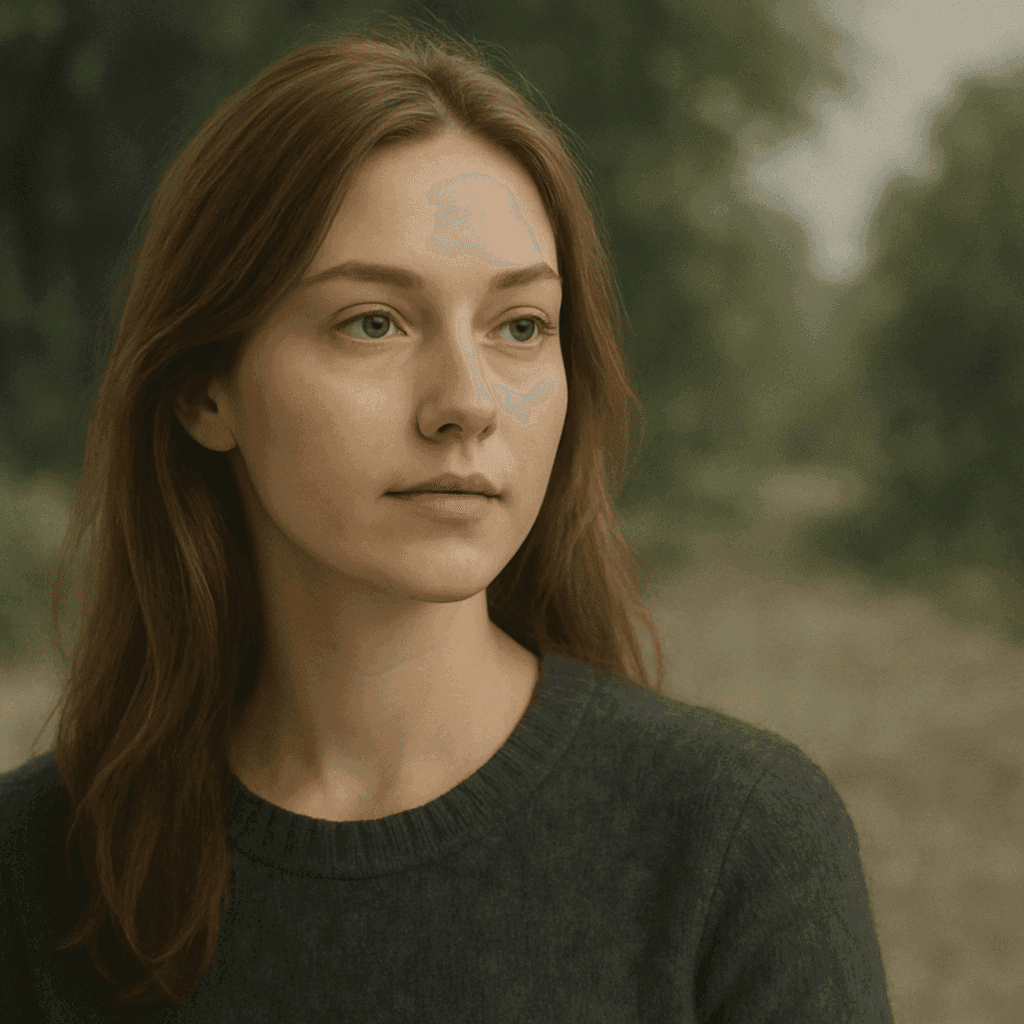

HiDream-O1-Image converts detailed text prompts directly into pixel-perfect images at up to 2,048 × 2,048 resolution — without upscaling artifacts. Because the model operates in pixel space rather than compressed latent space, fine details like fabric textures, architectural lines, and facial features remain sharp and true to your prompt. Ideal for commercial product shots, concept art, and editorial visuals.

Instruction-Based Image Editing with Natural Language

Forget manual masking and layer juggling in Photoshop. With HiDream-O1-Image, you describe the edit you want in plain English — "remove the earphones," "change the jacket to red," "add fog to the background" — and the model applies the modification while preserving the original composition and aspect ratio. One reference image plus one sentence is all you need.
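If you prepare reference images yourself before sending them for editing, a helper like the one below keeps the aspect ratio while staying inside the 2,048-pixel limit. This is a sketch, not the model's own preprocessing: the function name and the rounding to a multiple of 16 are assumptions made for illustration.

```python
def fit_within(width: int, height: int, max_side: int = 2048, multiple: int = 16):
    """Shrink (width, height) to fit inside max_side, keeping the aspect ratio.

    Images that already fit are never scaled up. Each side is then rounded
    down to a multiple of `multiple` (an assumed patch size), which can shift
    the aspect ratio very slightly.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    longest = max(width, height)
    if longest > max_side:
        # Integer math avoids floating-point rounding surprises.
        width = width * max_side // longest
        height = height * max_side // longest

    def snap(side: int) -> int:
        return max(multiple, side // multiple * multiple)

    return snap(width), snap(height)


print(fit_within(4000, 3000))  # (2048, 1536)
print(fit_within(1200, 800))   # (1200, 800)
```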

Subject-Driven Personalization Across Multiple References

Maintaining a consistent character, product, or brand element across new scenes is one of the hardest problems in AI image generation. HiDream-O1-Image supports multi-reference personalization, accepting up to 12 reference images and intelligently mapping your specific subjects into entirely new environments while preserving their exact identity — face, outfit, logo, or product detail.

Precise Multilingual Visual Text Rendering

Most AI image generators mangle text inside images. HiDream-O1-Image achieves 0.979 (English) and 0.978 (Chinese) on LongText-Bench, handling up to 5 distinct text regions within a single composition. Whether you're producing English ad banners, bilingual product labels, or localized marketing graphics, text comes out legible and correctly positioned — no post-production required.

Reasoning-Driven Prompt Agent for Complex Scenes

Complex prompts with multi-object relationships, specific spatial logic, or layered storytelling regularly confuse standard diffusion models. HiDream-O1-Image's integrated Reasoning-Driven Prompt Agent — powered by Gemma-4-31B weights or any OpenAI-compatible API — thinks through your scene's structure before generation, automatically enriching your raw input into a precision-engineered prompt. The result: fewer generation retries, more usable first outputs.

ComfyUI & API Integration for Professional Workflows

HiDream-O1-Image is designed to drop into existing creative pipelines. The model supports ComfyUI node integration (BF16 / FP16 / FP8 precision options), local LoRA loading, and OpenAI-compatible API calls via the Prompt Agent. Whether you're building an automated content pipeline or an agency workflow, HiDream-O1-Image slots in without forcing you to rebuild from scratch.

Applications

What Can You Create With HiDream-O1-Image?

From e-commerce assets to storyboards, HiDream-O1-Image handles the visual workloads that used to take a full production team.

Product Photography at Scale

Generate clean, high-resolution product shots against any background — no physical studio, no photographer. HiDream-O1-Image preserves every product detail at 2K, ready for Amazon, Shopify, or DTC listings.

On-Brand Ad Creatives in Minutes

Brief the model the way you'd brief a designer: describe the scene, color palette, and messaging. The built-in Reasoning Agent ensures spatial layout and object placement match your creative direction — no prompt engineering degree needed.

Character-Consistent Storyboards

Use multi-reference personalization to keep your protagonist's face, outfit, and props consistent across every panel. HiDream-O1-Image handles scene transitions that break other models.

Multilingual Visuals Without Retouching

Generate posters, banners, and social graphics with English, Chinese, or multilingual text rendered natively inside the image, with no separate type layer needed. The model's HPSv3 score of 10.37 suggests the results also hold up on human-preference quality.

Natural Language Photo Editing

Hand the model a reference photo and a plain-English edit instruction. Remove objects, change clothing colors, swap backgrounds, or adjust lighting — without touching a masking tool.

Open-Weight Model Integration

Pull the MIT-licensed weights from GitHub, quantize to FP8 for a ~10GB VRAM footprint, and integrate via ComfyUI or API. HiDream-O1-Image is the highest-ranked open-weight model on the Artificial Analysis Text-to-Image Arena, making it a credible production-grade choice.

Model Comparison

HiDream-O1-Image vs. FLUX, DALL-E 3 & GPT Image 2: Full Comparison

Head-to-head on the metrics that matter for production use: quality, resolution, prompt accuracy, licensing, and parameter efficiency.

| Metric | HiDream-O1-Image | FLUX.2 Dev | DALL-E 3 | GPT Image 2 |
|---|---|---|---|---|
| Parameters | 8B | 56B | Closed | Closed |
| Native Resolution | 2048 × 2048 | 1024px | 1024px | 1024px |
| Architecture | Pixel-level UiT (no VAE) | Latent DiT + VAE | Closed | Closed |
| GenEval Score | 0.90 | 0.66 | 0.67 | 0.89 |
| DPG-Bench Score | 89.83 | 83.79 | 83.50 | — |
| HPSv3 Score | 10.37 | — | — | 10.21 |
| Text Rendering | Native (0.979 EN) | Poor | Moderate | Moderate |
| Image Editing | Native instruction-based | Separate model | Via API | Yes |
| Subject Personalization | Multi-reference (up to 12) | No | No | Limited |
| License | MIT (commercial) | Apache 2.0 | Closed / paid | Closed / paid |
| ComfyUI Support | Yes | Yes | No | No |
| Prompt Reasoning Agent | Built-in | No | No | No |
| Open Weights | Yes (GitHub) | Yes | No | No |

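HiDream-O1-Image posts a higher GenEval score than DALL-E 3 and GPT Image 2 (0.90 vs. 0.67 and 0.89) and a higher HPSv3 score than GPT Image 2 (10.37 vs. 10.21), while using 7× fewer parameters than FLUX.2 Dev (8B vs. 56B). It also offers native 2K resolution and MIT licensing that closed models can't match.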
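The headline figures can be checked with a few lines of arithmetic. The numbers below are copied from the comparison table; the dictionary names are only for illustration, and the closed models are left out of the parameter ratio because their sizes are not published.

```python
# Figures copied from the comparison table in this section.
params_b = {"HiDream-O1-Image": 8, "FLUX.2 Dev": 56}
geneval = {
    "HiDream-O1-Image": 0.90,
    "FLUX.2 Dev": 0.66,
    "DALL-E 3": 0.67,
    "GPT Image 2": 0.89,
}

# The 7x claim is a parameter-count ratio against FLUX.2 Dev only.
ratio = params_b["FLUX.2 Dev"] / params_b["HiDream-O1-Image"]
print(f"Parameter ratio vs FLUX.2 Dev: {ratio:.0f}x")

# GenEval margin of HiDream-O1-Image over each other model.
margins = {
    name: round(geneval["HiDream-O1-Image"] - score, 2)
    for name, score in geneval.items()
    if name != "HiDream-O1-Image"
}
for name, margin in margins.items():
    print(f"GenEval margin over {name}: {margin:+.2f}")
```

The GenEval gap is wide against FLUX.2 Dev and DALL-E 3 but only 0.01 against GPT Image 2, which is worth keeping in mind when reading the comparison.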

FAQ

Frequently Asked Questions

Real questions from creators, developers, and agencies — answered directly, without fluff.

01. What is HiDream-O1-Image?

HiDream-O1-Image is an open-source, 8-billion-parameter AI image generation model built on a Pixel-level Unified Transformer (UiT). Released by HiDream-ai under the MIT License on May 8, 2026, it supports text-to-image generation, instruction-based image editing, and subject-driven personalization — all in a single model without external VAEs or disjoint text encoders, at up to 2,048 × 2,048 native resolution.

02. Can I run HiDream-O1-Image online without a GPU?

Yes. You can generate directly on this page, with no GPU and no install required. For local deployment, a CUDA-capable GPU is necessary; the FP8-quantized variant needs roughly 10GB of VRAM, so it runs on consumer cards such as the RTX 3080 or RTX 4070 rather than only in data-center environments.
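The 10GB figure can be sanity-checked with back-of-the-envelope arithmetic. The sketch below counts the weights only, using the 8B parameter count and the standard byte widths of each precision; activations and other runtime buffers come on top, and their size is not given here.

```python
# Bytes used to store one parameter at each precision.
BYTES_PER_PARAM = {"BF16": 2, "FP16": 2, "FP8": 1}


def weight_gib(params_billion: float, precision: str) -> float:
    """Approximate size of the model weights alone, in GiB."""
    total_bytes = params_billion * 1e9 * BYTES_PER_PARAM[precision]
    return total_bytes / 2**30


for precision in ("BF16", "FP8"):
    print(precision, round(weight_gib(8, precision), 1), "GiB")
# BF16 14.9 GiB
# FP8 7.5 GiB
```

At FP8 the weights alone take about 7.5 GiB, which leaves a couple of gigabytes of headroom inside a 10GB budget. At BF16 or FP16 the weights alone are close to 15 GiB, so those precisions call for a larger card.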

03. What's the difference between HiDream-O1-Image Full and the Dev variant?

The Full model uses 50 sampling steps with classifier-free guidance (CFG 5.0) and produces the highest photographic detail and realism — best for final production outputs. The Dev variant uses a distilled 28-step schedule with CFG 0.0, converging faster for rapid iteration and prototyping. Both checkpoints share the same 8B parameter count and MIT license.
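One way to see why Dev iterates faster is to count model evaluations per image. The sketch below uses the step counts and CFG values quoted above. It assumes the usual classifier-free guidance setup, where each step runs one conditional and one unconditional pass, and that a CFG value of 0.0 means guidance is switched off; neither detail is confirmed for this model, and the class name is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SamplingPreset:
    steps: int
    cfg_scale: float

    @property
    def forward_passes(self) -> int:
        """Model evaluations per image.

        Assumes CFG doubles the work per step (conditional plus
        unconditional pass) and that a scale of 0.0 disables CFG.
        """
        return self.steps * (2 if self.cfg_scale > 0 else 1)


# Step counts and CFG values as stated for the two checkpoints.
FULL = SamplingPreset(steps=50, cfg_scale=5.0)
DEV = SamplingPreset(steps=28, cfg_scale=0.0)

print(FULL.forward_passes, DEV.forward_passes)              # 100 28
print(round(FULL.forward_passes / DEV.forward_passes, 1))   # 3.6
```

Under those assumptions, Dev needs roughly 3.6× fewer model evaluations per image than Full, which fits its role as the checkpoint for rapid iteration.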

04. How does HiDream-O1-Image compare to FLUX and DALL-E 3?

HiDream-O1-Image outperforms FLUX.2 Dev on GenEval (0.90 vs. 0.66) and DPG-Bench (89.83 vs. 83.79) while using 7× fewer parameters. It also outscores DALL-E 3 on GenEval (0.90 vs. 0.67) and DPG-Bench (89.83 vs. 83.50), and GPT Image 2 on HPSv3 human preference (10.37 vs. 10.21). Unlike the closed models, HiDream-O1-Image is open-weight, MIT-licensed, and natively generates at 2K resolution without upscaling.

05. Can I use HiDream-O1-Image-generated images commercially?

Yes. HiDream-O1-Image model weights and code are released under the MIT License, which permits personal, research, and commercial use. You should review the full MIT License terms in the GitHub repository and ensure your use case complies with applicable content policies on any inference platform you use.

06. Does HiDream-O1-Image support image editing, not just generation?

Yes. HiDream-O1-Image natively supports instruction-based image editing — you provide a reference image and a plain-English instruction (e.g., "remove the earphones"), and the model applies the change while preserving the original composition. This is built into the same model that handles text-to-image, with no separate editing pipeline required.

07. How does the built-in Reasoning Agent work?

The Reasoning-Driven Prompt Agent is a separate wrapper — not part of the diffusion model itself — that runs a language model (Gemma-4-31B or any OpenAI-compatible API) over your raw instruction before image generation begins. It analyzes your prompt's implied spatial logic, object relationships, and attributes, then rewrites it into a detailed, structured directive. This dramatically reduces prompt engineering effort for complex scenes.
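Here is a minimal sketch of what the rewrite step could look like against an OpenAI-compatible chat-completions endpoint, using only the Python standard library. The system prompt, function names, endpoint URL, model name, and API key are all placeholders invented for illustration; the agent's real instructions and configuration are not described here.

```python
import json
import urllib.request

# Hypothetical system prompt; the agent's real instructions are not published here.
SYSTEM_PROMPT = (
    "Rewrite the user's image request as one detailed prompt. "
    "Spell out object counts, spatial relations, colors, and any visible text."
)


def build_rewrite_request(raw_prompt: str, base_url: str, model: str,
                          api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat-completions request for prompt rewriting."""
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": raw_prompt},
        ],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def extract_rewritten_prompt(response_body: str) -> str:
    """Pull the rewritten prompt out of a chat-completions JSON response."""
    return json.loads(response_body)["choices"][0]["message"]["content"].strip()


# Placeholder endpoint, model name, and key; replace with your own.
request = build_rewrite_request(
    "a cat to the left of two red books",
    base_url="http://localhost:8000/v1",
    model="my-rewriter-model",
    api_key="YOUR_KEY",
)
print(request.full_url)  # http://localhost:8000/v1/chat/completions
```

To actually send it, pass `request` to `urllib.request.urlopen`, read the response body, and hand the decoded text to `extract_rewritten_prompt`. The enriched prompt is then what you would give to the image model.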

08. Will my images or prompts be used to train the model?

HiDream-O1-Image is an open-weight model you run locally or via cloud inference. If you self-host using the GitHub weights, your prompts and generated images are fully under your control and are not transmitted anywhere. When using our hosted service, please review our Privacy Policy for data handling practices.

09. Does HiDream-O1-Image support languages other than English?

Yes. HiDream-O1-Image achieves 0.979 (English) and 0.978 (Chinese) on LongText-Bench for visual text rendering within generated images. The Reasoning-Driven Prompt Agent currently operates in English; non-English instructions may benefit from translation before input. Generated multilingual text inside images — banners, labels, posters — is natively supported.

Start Creating

Experience HiDream-O1-Image in Your Browser — No GPU Required

HiDream-O1-Image is free to try right now, no account needed. Type a prompt and see what the open-weight model ranked #8 on the Artificial Analysis Text-to-Image Arena can do for your creative work. When you're ready to scale, Pro removes the watermark, unlocks full 2K resolution output, and includes commercial rights for everything you generate.

Free generations include a watermark. Upgrade to Pro for full 2K output and commercial rights.